What Is MCP (Model Context Protocol) and How Can It Transform Your Business?

Quick Answer

Model Context Protocol (MCP) is an open standard created by Anthropic that lets AI models connect directly to your business tools — databases, CRMs, email, file systems, and APIs — through a universal interface. Instead of building custom integrations for every AI-to-tool connection, MCP provides a single protocol that works across all major AI platforms. With 18,000+ MCP servers available, 97 million monthly SDK downloads, and adoption by Anthropic, OpenAI, Google, and Microsoft, MCP is rapidly becoming the standard way businesses connect AI to their operations.

Key Answers

- What is MCP (Model Context Protocol)?

- MCP is an open-source protocol created by Anthropic in November 2024 that standardises how AI models connect to external tools and data sources. It works like a universal adapter — one protocol that lets any AI assistant access any business system.

- Why does MCP matter for business owners?

- MCP eliminates the need to build custom integrations between AI and each business tool. Organisations implementing MCP report 40-60% faster AI agent deployment times and significantly reduced engineering costs for maintaining AI-data connections.

- Which AI platforms support MCP?

- All major AI platforms now support MCP: Anthropic Claude, OpenAI ChatGPT, Google Gemini, Microsoft Copilot, and development tools like Cursor, Windsurf, and Zed. This universal adoption means MCP integrations work across any AI provider.

- How much does MCP implementation cost?

- Most MCP servers are open-source and free. Implementation costs range from zero for pre-built servers to $5,000-$20,000 for custom MCP servers that connect to proprietary business systems. The ROI comes from eliminating custom integration maintenance.

- What can businesses do with MCP today?

- Businesses use MCP to let AI agents query databases, read and write to CRMs, manage files, send emails, process invoices, monitor systems, and automate multi-step workflows across connected tools — all through natural language instructions.

Key Takeaways

- MCP has grown from an internal Anthropic experiment in November 2024 to an industry standard with 18,000+ available servers, 97 million monthly SDK downloads, and adoption by every major AI provider.

- Organisations implementing MCP report 40-60% faster AI agent deployment times because MCP eliminates the need to build and maintain custom integrations between AI models and business tools.

- Gartner predicts 40% of enterprise applications will include task-specific AI agents by the end of 2026 — and MCP is the protocol that makes those agents useful by connecting them to real business data.

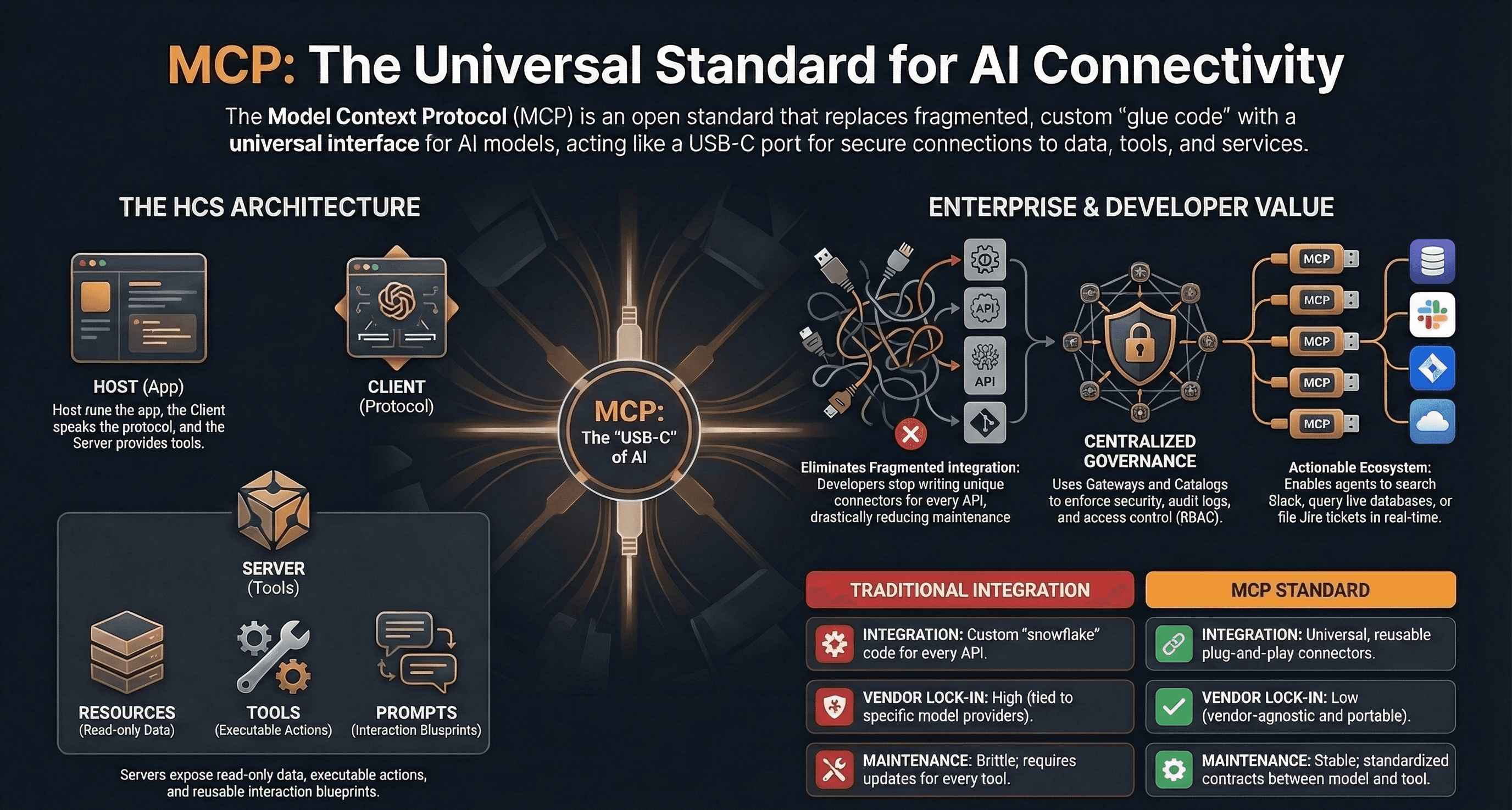

- MCP uses a client-server architecture where AI applications (clients) connect to lightweight servers that expose specific capabilities — databases, APIs, file systems — through a standardised interface.

- The biggest business impact of MCP is vendor independence: because MCP is an open standard, businesses can switch AI providers without rebuilding integrations, avoiding lock-in to any single platform.

What Is MCP (Model Context Protocol)?

MCP is an open-source protocol that standardises how AI models connect to external tools and data sources. Anthropic released it in November 2024, and every major AI provider adopted it within months.

Before MCP, connecting an AI assistant to your CRM required custom integration code. Connecting it to your database required different code. Email, file storage, project management — each one needed its own bespoke connector. MCP replaces all of that with a single, universal protocol. Build one MCP server for your system, and every AI model that supports MCP can access it immediately.

The analogy that keeps surfacing is USB-C. Before USB-C, every device needed a different cable; USB-C replaced them with one connector. MCP does the same for AI: it gives every AI model a single, standardised way to plug into your business tools. The result: 18,000+ MCP servers are now available on public directories like MCP.so and PulseMCP, covering everything from Slack and Google Drive to PostgreSQL and Salesforce.

Why Does MCP Matter for Business Owners?

MCP matters because it eliminates the most expensive part of AI adoption: connecting AI to your actual business data. Without MCP, every AI integration is a custom engineering project. With MCP, it is a configuration step.

A 2025 Gartner survey found that 67% of enterprise technology leaders cite integration complexity as the top barrier to deploying AI agents. MCP directly addresses this. Organisations implementing MCP report 40-60% faster agent deployment times. That speed matters for SMBs — every week spent on integration code is a week your AI automation is not generating revenue.

Gartner also predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. Those agents need to read your data, write to your systems, and trigger actions across your tool stack. MCP is the protocol that makes this possible without rebuilding integrations every time you change AI providers.

The vendor independence angle is equally important. Because MCP is an open standard supported by Anthropic, OpenAI, Google, and Microsoft, businesses avoid lock-in. Your MCP servers work the same whether your AI runs on Claude, GPT, or Gemini. Switch providers, and your integrations stay intact.

How Does MCP Work?

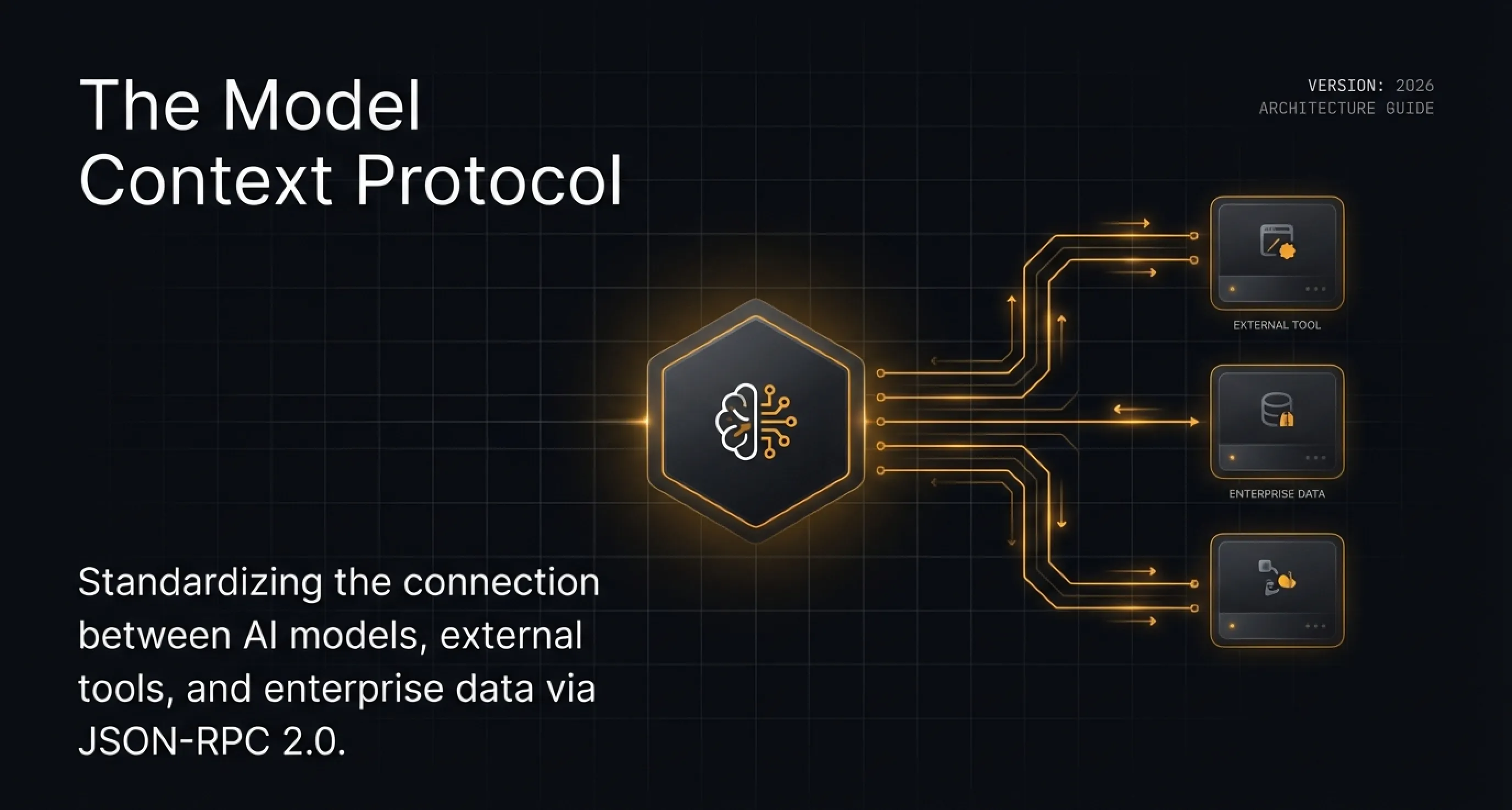

MCP uses a client-server architecture. AI applications (MCP clients) connect to lightweight MCP servers that expose specific capabilities — reading a database, querying an API, managing files — through a standardised JSON-RPC interface.

There are three core concepts. First, Tools — these are actions the AI can take, like "send an email" or "create a database record." Second, Resources — these are data the AI can read, like files, documents, or API responses. Third, Prompts — these are reusable templates that guide the AI on how to use the tools and resources effectively.

In practice, this means an AI assistant can ask an MCP server "what tools do you have?" and receive a structured list of available actions. The AI then decides which tools to use based on the user's request. This is the same orchestration pattern behind agent-style "AI employees": the AI coordinates multiple tools to complete complex tasks autonomously.
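To make the "what tools do you have?" exchange concrete, here is a minimal sketch of the JSON-RPC 2.0 messages involved. The method names `tools/list` and `tools/call` come from the MCP specification; the `create_record` tool and its schema are hypothetical and only illustrate the shape of a response.

```python
import json

# JSON-RPC 2.0 request a client sends to discover a server's tools.
list_request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# Illustrative response. The "create_record" tool and its schema are
# hypothetical; a real server describes its own tools here.
list_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "create_record",
                "description": "Create a database record",
                "inputSchema": {
                    "type": "object",
                    "properties": {"table": {"type": "string"}},
                    "required": ["table"],
                },
            }
        ]
    },
}


def tool_names(response: dict) -> list[str]:
    """Return the tool names advertised in a tools/list response."""
    return [tool["name"] for tool in response["result"]["tools"]]


# Request the client sends once the model decides to use a tool.
call_request = {
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {"name": "create_record", "arguments": {"table": "customers"}},
}

print(json.dumps(list_request))
print(tool_names(list_response))
```

The model never sees your database directly. It sees the tool descriptions and schemas, and the client turns its choice into a `tools/call` request.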

MCP servers are lightweight programs, and most are under 500 lines of code. They run locally on your machine or on a server, and they communicate with AI clients over standard transports: stdio for local connections and streamable HTTP for remote ones (the earlier HTTP with Server-Sent Events transport was superseded in 2025). This simplicity is why the ecosystem grew to 18,000+ servers in just over a year.
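To show why a server can be so small, here is a toy sketch of the core of a stdio-style server: read one newline-delimited JSON-RPC message, dispatch it, write one reply. It is not a conformant MCP server. It skips the initialization handshake, capability negotiation, and notifications, and the `echo` tool is hypothetical. In practice you would likely use an official SDK rather than hand-rolling this.

```python
import json
import sys

# Hypothetical tool registry: tool name -> function taking the arguments dict.
TOOLS = {"echo": lambda arguments: arguments.get("text", "")}


def _error(request_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error reply."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}
    )


def handle_line(line: str) -> str:
    """Handle one JSON-RPC message and return the reply as a JSON string."""
    request = json.loads(line)
    request_id = request.get("id")
    method = request.get("method")

    if method == "tools/list":
        result = {"tools": [{"name": name} for name in TOOLS]}
    elif method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, "Unknown tool")
        text = tool(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        return _error(request_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


def serve(stdin=sys.stdin, stdout=sys.stdout) -> None:
    """Read requests line by line from stdin and write replies to stdout."""
    for line in stdin:
        if line.strip():
            stdout.write(handle_line(line) + "\n")
            stdout.flush()
```

Calling `serve()` would turn this into a process that an MCP-style client could launch and talk to over stdin and stdout, which is the local transport described above.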

How Does MCP Compare to Traditional API Integrations?

Traditional API integrations require custom code for every connection between two systems. MCP provides a universal interface that lets any AI model connect to any tool through a single protocol — replacing dozens of bespoke integrations with one standard.

The scaling problem with traditional integrations is multiplicative. If you have 5 AI models and 10 business tools, you need up to 50 custom integrations (5 × 10). With MCP you build 10 servers, one per tool, and each of the 5 AI applications needs only its built-in MCP client: 15 components instead of 50. This is the "M×N to M+N" reduction that makes MCP transformative for businesses running multiple AI tools.
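The arithmetic is easy to check. The sketch below counts point-to-point integrations against MCP components for the 5-model, 10-tool example; the function names are illustrative. Counting only the servers a business builds gives 10, and counting the MCP client inside each AI application as well gives M + N = 15.

```python
def integrations_without_mcp(ai_models: int, tools: int) -> int:
    """Every AI model needs its own connector to every tool: M x N."""
    return ai_models * tools


def components_with_mcp(ai_models: int, tools: int) -> int:
    """One MCP client per AI model plus one MCP server per tool: M + N."""
    return ai_models + tools


models, tools = 5, 10
print(integrations_without_mcp(models, tools))  # 50
print(components_with_mcp(models, tools))       # 15
```

The gap widens as either side grows: adding an eleventh tool costs one more server with MCP, but five more integrations without it.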

Traditional integrations also break when APIs change. Every update to Salesforce's API, for example, requires updating every custom integration that touches it. With MCP, you update one server, and all connected AI models inherit the change. This is why organisations report significant reductions in engineering time dedicated to maintaining AI-data connections after adopting MCP.

What Can Businesses Build With MCP Today?

Businesses are using MCP to build AI agents that query databases, manage CRMs, process invoices, monitor systems, and automate multi-step workflows — all through natural language conversations with AI assistants.

The most common MCP use cases for SMBs fall into four categories. First, data access — letting AI agents read from and write to databases, spreadsheets, and file systems. Second, communication — connecting AI to email, Slack, and messaging platforms for automated outreach and triage. Third, workflow automation — chaining multiple tools together so an AI agent can, for example, read a support ticket, look up the customer in the CRM, draft a response, and log the interaction. Fourth, AI agents that handle business tasks autonomously — monitoring dashboards, escalating issues, and taking action without human intervention.

Real-world enterprise deployments include Block using MCP for internal developer tools, Bloomberg integrating MCP into financial data analysis workflows, and Microsoft embedding MCP support in Business Central for ERP-connected AI agents. For SMBs, the most impactful use cases are connecting AI to existing CRM and accounting systems — the tools where manual data entry and context switching consume the most hours.

Which Companies Have Adopted MCP?

MCP has been adopted by every major AI provider and hundreds of enterprise companies. Anthropic, OpenAI, Google, and Microsoft all support MCP natively in their AI platforms. Block, Bloomberg, Amazon, and hundreds of Fortune 500 companies have deployed MCP in production.

The adoption timeline tells the story. Anthropic released MCP in November 2024 as an open-source standard. By early 2025, OpenAI announced MCP support in ChatGPT and the Agents SDK. Google added MCP to Gemini. Microsoft integrated MCP into Copilot Studio and Business Central. Developer tools — Cursor, Windsurf, Zed, Replit — added MCP support in their AI coding assistants. Within 12 months, MCP went from a single company's experiment to the industry default.

The SDK download numbers confirm the scale: 97 million monthly downloads. MCP server downloads grew from approximately 100,000 in November 2024 to over 8 million by April 2025. The open-source ecosystem now includes 18,000+ servers on MCP.so, with PulseMCP tracking 8,600+ actively maintained servers across every major business tool category.

How Much Does MCP Implementation Cost?

Most MCP servers are open-source and free to use. Custom MCP servers for proprietary business systems typically cost $5,000-$20,000 to build and deploy. The primary ROI comes from eliminating the ongoing cost of maintaining custom integrations — which can save tens of thousands annually for businesses running multiple AI tools.

MCP implementation falls into three cost tiers. The first is free — connecting pre-built open-source MCP servers for common tools like Slack, GitHub, PostgreSQL, Google Drive, and Notion. These servers are maintained by the community and can be set up in hours. The second tier is low-cost ($1,000-$5,000) — adapting existing MCP servers or building simple custom servers for your specific business tools. The third tier is mid-range ($5,000-$20,000) — building production-grade MCP servers for proprietary systems, legacy databases, or complex business logic. This follows the same build-vs-buy decision framework that applies to all custom software.

The cost comparison that matters is MCP versus the alternative. Without MCP, connecting a single AI model to five business tools requires five separate custom integrations — typically $3,000-$10,000 each. Maintaining those integrations costs $500-$2,000 per year per integration as APIs change. MCP replaces that with five $1,000-$5,000 servers that work with every AI model and need minimal maintenance. Over three years, MCP saves 60-80% on integration costs for businesses using multiple AI tools.
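As a rough check on that range, the sketch below runs the figures from the paragraph above over three years for one AI model and five tools. It assumes MCP server upkeep is zero, which is a simplification of "minimal maintenance"; real upkeep would lower the saving somewhat, while adding more AI models would raise it.

```python
YEARS = 3
TOOLS = 5


def custom_total(build_each: int, upkeep_each_per_year: int,
                 tools: int = TOOLS, years: int = YEARS) -> int:
    """Build cost plus yearly upkeep for one custom integration per tool."""
    return tools * (build_each + upkeep_each_per_year * years)


def mcp_total(build_each: int, tools: int = TOOLS) -> int:
    """Build cost for one MCP server per tool (assumption: zero upkeep)."""
    return tools * build_each


def saving(custom: int, mcp: int) -> float:
    """Fraction saved by MCP relative to the custom approach."""
    return 1 - mcp / custom


low_custom = custom_total(3_000, 500)       # 22,500
high_custom = custom_total(10_000, 2_000)   # 80,000
low_mcp = mcp_total(1_000)                  # 5,000
high_mcp = mcp_total(5_000)                 # 25,000

print(f"low-end saving:  {saving(low_custom, low_mcp):.0%}")    # 78%
print(f"high-end saving: {saving(high_custom, high_mcp):.0%}")  # 69%
```

Both ends land inside the 60-80% range under these assumptions.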

What Are the Risks and Limitations of MCP?

MCP's main risks are security implementation, server quality variance, and the protocol's relative immaturity. The protocol itself does not enforce authentication or access controls — that responsibility falls on each server implementation.

Security is the biggest concern. MCP gives AI models direct access to business systems — databases, file systems, APIs. A poorly configured MCP server could expose sensitive data or allow unintended write operations. Enterprise deployments should use MCP gateways that enforce centralised authentication, rate limiting, and audit logging. This is the same security-first mindset that applies to all AI-generated code.
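A gateway's core job can be sketched in a few lines: decide whether a given caller may invoke a given tool, and record the decision. The roles, tool names, and logger name below are all hypothetical, and a production gateway would also need real authentication and rate limiting.

```python
import logging

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("mcp.audit")  # hypothetical logger name

# Hypothetical policy: which tools each role is allowed to call.
ALLOWED_TOOLS = {
    "support_agent": {"crm.read_customer", "tickets.read"},
    "finance_agent": {"invoices.read"},
}


def authorize(role: str, tool_name: str) -> bool:
    """Allow a tool call only if the role's allow-list contains the tool.

    Every decision, allowed or denied, is written to the audit log.
    Unknown roles get an empty allow-list, so they are denied by default.
    """
    allowed = tool_name in ALLOWED_TOOLS.get(role, set())
    audit_log.info("role=%s tool=%s allowed=%s", role, tool_name, allowed)
    return allowed


print(authorize("support_agent", "tickets.read"))   # True
print(authorize("support_agent", "invoices.read"))  # False
```

Denying by default matters more than the specific policy: a tool nobody listed is a tool no agent can call.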

Server quality varies widely. The 18,000+ servers on public directories range from production-grade to experimental weekend projects. Businesses should prioritise servers from the official Model Context Protocol GitHub repository (maintained by Anthropic), established tool vendors, and servers with active maintenance and clear documentation. The Zuplo State of MCP report recommends treating community MCP servers with the same caution you would apply to any open-source dependency.

MCP is also still evolving. The specification continues to add features — streamable HTTP transport replaced the earlier SSE transport in 2025, and authentication standards are still being formalised. For most SMBs, this immaturity is manageable: start with well-established servers for common tools, and build custom servers incrementally as the protocol stabilises.

How Do You Get Started With MCP?

Start by connecting pre-built MCP servers to an AI assistant you already use — Claude Desktop, Cursor, or ChatGPT. Pick one business tool where AI access would save the most time, and connect it first.

The fastest path to MCP value is a four-step process. First, identify the business tool where your team spends the most time on manual data retrieval or entry — usually a CRM, database, or project management tool. Second, find a pre-built MCP server for that tool on the official GitHub repository or MCP.so. Third, configure the server in your AI client — Claude Code, Claude Desktop, and Cursor all support MCP configuration through a simple JSON file. Fourth, test it by asking your AI assistant to perform a task that requires the connected tool.
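For the third step, the JSON file is usually short. The sketch below generates a configuration in the shape Claude Desktop reads from `claude_desktop_config.json`, using the reference filesystem server. The folder path is a placeholder, and other clients use similar but not identical files, so check your client's documentation before copying it.

```python
import json

# Shape used by Claude Desktop: a top-level "mcpServers" object mapping a
# server label to the command that launches it. The label "filesystem" and
# the folder path are placeholders chosen for this example.
config = {
    "mcpServers": {
        "filesystem": {
            "command": "npx",
            "args": [
                "-y",
                "@modelcontextprotocol/server-filesystem",
                "/path/to/shared/folder",
            ],
        }
    }
}

print(json.dumps(config, indent=2))
```

After saving the output and restarting the client, the server's tools should appear in the assistant, at which point you can move on to the fourth step and test a real task.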

For businesses with proprietary systems — custom databases, internal APIs, or legacy software — the next step is building a custom MCP server. This is where a development partner adds the most value. A custom MCP server wraps your business system in the MCP standard, making it accessible to any AI model. Once built, it works with every AI platform that supports MCP, future-proofing the investment against AI provider changes.

What Is the Bottom Line?

MCP is the protocol that turns AI assistants from conversational tools into operational tools that connect to your business systems. With universal adoption by Anthropic, OpenAI, Google, and Microsoft, it is no longer a question of whether your business will use MCP — it is a question of when. Starting now with pre-built servers for your most-used tools is the lowest-risk, highest-impact first step.

The businesses that adopt MCP early gain a compounding advantage: every MCP server you build makes your AI agents more capable, every AI provider that adds MCP support makes your existing servers more valuable, and every tool vendor that publishes an MCP server expands what your AI can do. MCP is the infrastructure layer of the agentic development revolution — and for SMBs, the window to build that advantage is open now.

Research Data

Key strategies and factors based on original research

| Integration approach | Setup complexity | Maintenance cost | Flexibility | AI compatibility | Best use case |
|---|---|---|---|---|---|
| Model Context Protocol (MCP) | Low | Modular | High | Native | Standardized 'USB-C' for AI; enables real-time data discovery and independent tool invocation by LLMs across various platforms. |
| Traditional API Integration (REST/gRPC) | High | Fragile | Low | Limited | Custom 'snowflake' solutions requiring hard-coded bridges, manual authentication handling, and predefined schemas for specific vendor tools. |

Original research by ManaTech

Frequently Asked Questions

Is MCP only for developers or can business owners use it?

MCP is a developer protocol, but business owners benefit directly. Pre-built MCP servers for tools like Slack, Google Drive, PostgreSQL, and Salesforce can be set up without writing code. For custom business systems, a developer builds the MCP server once, and then any AI assistant can use it.

How is MCP different from APIs?

APIs require custom code for every connection between two systems. MCP is a universal adapter — one protocol that lets any AI model connect to any tool. Think of it like the difference between needing a different charger for every device versus USB-C working with everything.

Is MCP secure enough for business data?

MCP supports authentication, access controls, and encrypted connections. However, security depends on implementation. Enterprise deployments should use MCP gateways that enforce centralised authentication, rate limiting, and audit logging. The protocol itself does not enforce security — the server implementation does.

Can MCP connect to legacy business systems?

Yes. MCP servers can be built to wrap any system with an API, database connection, or even screen scraping capability. If your legacy system has any programmatic access point, an MCP server can expose it to AI models. This is one of the key advantages for SMBs with older tech stacks.

Will MCP replace Zapier and Make.com?

Not directly. Zapier and Make.com automate predefined workflows between apps. MCP gives AI agents real-time access to tools so they can make decisions and take actions dynamically. MCP and workflow automation tools are complementary — MCP adds the AI intelligence layer that Zapier lacks.

How long does it take to implement MCP?

Pre-built MCP servers for popular tools (Slack, GitHub, databases) can be connected in hours. Custom MCP servers for proprietary business systems take 1-3 weeks to build. A full MCP-powered AI agent deployment typically takes 4-8 weeks from start to production.

