The Rise of Agentic Development: How AI Agents Are Replacing Development Teams

Quick Answer

Agentic development is the shift from writing code to orchestrating teams of AI agents, enabling one developer to manage hundreds of autonomous agents working in parallel. This delivers custom software 3-5x faster at 70-80% lower cost, with projects that previously cost $80,000-$150,000 now deliverable at $15,000-$40,000.

Key Answers

- What is agentic development?

  Agentic development is a software development approach where one developer orchestrates teams of autonomous AI agents working in parallel, rather than writing code line by line.

- How much faster is agentic development?

  Projects that previously required 3-6 months with a full development team can now be delivered in 2-6 weeks at 70-80% lower cost through agentic development.

- How does the Agent Manager model work?

  The Agent Manager decomposes a project into parallel workstreams and assigns each to a specialised agent. One builds the database, another the API, another the frontend, and another writes tests.

- How does autonomous browser testing work?

  Testing agents launch applications in real browsers, navigate workflows like human users, fill out forms, click buttons, and record video proof of functionality as an auditable trail.

- How are artifacts replacing traditional code review?

  Artifacts are structured documents describing what was built, architectural decisions, and test results. Stakeholders can read, comment on, and approve them in plain language like a Google Doc.

Key Takeaways

- The Agent Manager model decomposes projects into parallel workstreams, assigning specialised agents to database, API, frontend, and testing tasks simultaneously in sandboxed environments.

- Autonomous browser testing agents launch applications in real browsers, navigate workflows like human users, and record video proof of functionality, replacing traditional QA cycles.

- Artifacts are structured documents replacing traditional code review, letting non-technical stakeholders read, comment on, and approve architectural decisions in plain language.

- Custom software that previously cost $80,000-$150,000 over six months can now be delivered at $15,000-$40,000 over 2-6 weeks through agentic development.

- Anthropic reports that Claude now writes approximately 70% of its own code, demonstrating that the transition from manual coding to AI agent orchestration is already well underway.

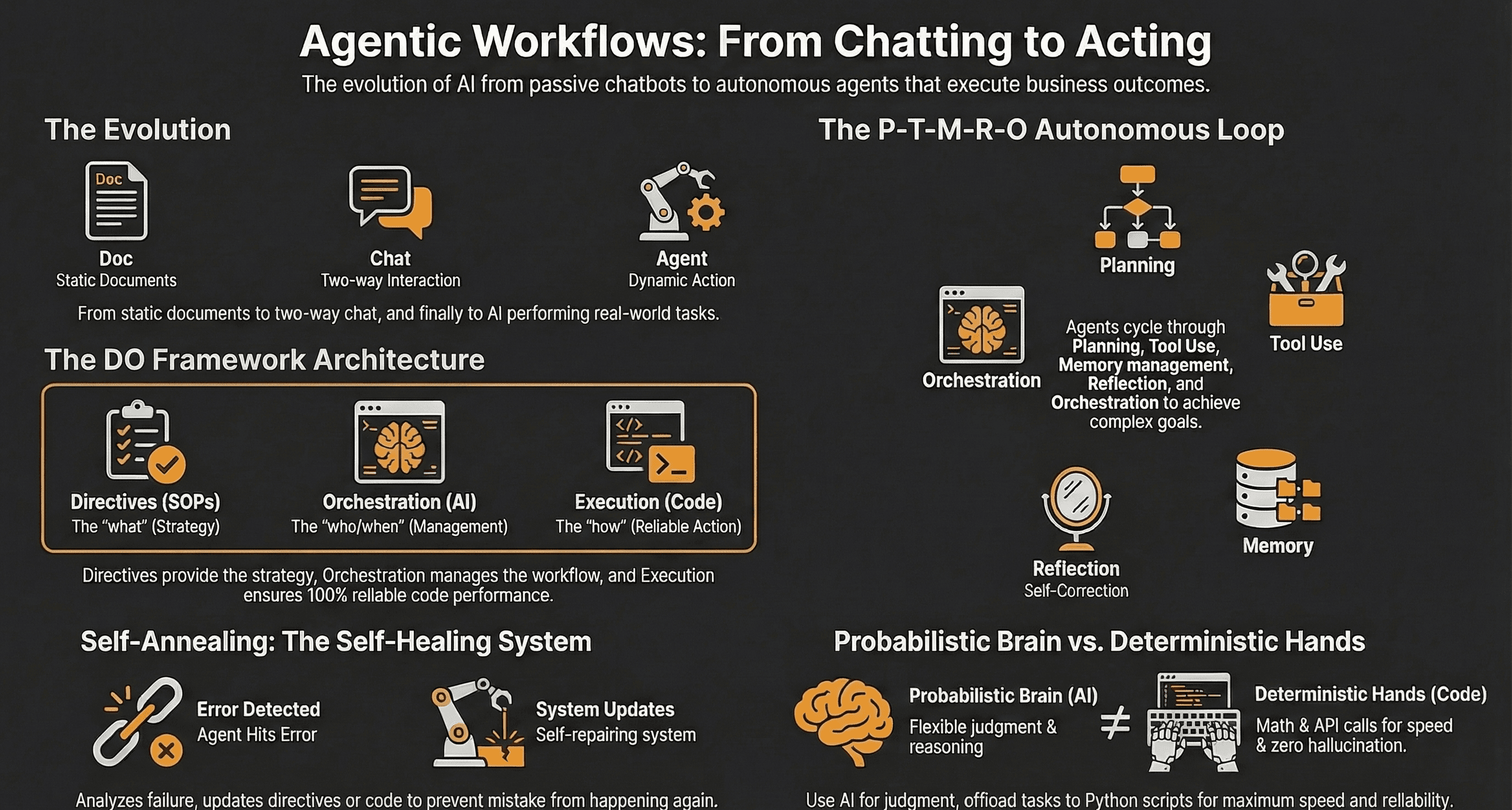

How Is Software Development Shifting From Coding to Orchestrating?

The developer role is evolving from writing code line by line to orchestrating teams of AI agents. One developer can now manage hundreds of autonomous agents working in parallel. Anthropic reports that Claude writes approximately 70% of its own code.

Software development is undergoing its most fundamental shift since the invention of high-level programming languages. The role of the developer is evolving from someone who writes code line by line to someone who orchestrates teams of AI agents. In this new model, a single developer can manage tens to hundreds of autonomous agents working in parallel. Each handles a different aspect of the application. The developer defines the architecture, sets the constraints, reviews the output, and makes the judgment calls that require human context. The agents handle the implementation. This is not speculative. Tools like Google Antigravity, Cursor with background agents, and Claude Code with multi-agent orchestration are shipping today. Anthropic reports that Claude now writes approximately 70% of its own code. The transition is happening faster than the industry predicted.

How Does the Agent Manager Model Work?

The Agent Manager decomposes a project into parallel workstreams and assigns each to a specialised agent in its own sandboxed environment. It coordinates all agents, resolves merge conflicts, and enforces coding standards across the project.

The Agent Manager is the core innovation driving agentic development. Instead of a single AI assistant working on one task at a time, the Agent Manager decomposes a project into parallel workstreams. It assigns each to a specialised agent. One agent builds the database layer while another constructs the API. Another creates frontend components. Another writes integration tests. Each agent operates in its own sandboxed environment with access only to the files and context it needs. The Agent Manager handles coordination. It resolves merge conflicts, enforces coding standards, manages dependencies between components, and ensures that the work of 50 agents assembles into a coherent application. Google Antigravity demonstrated running hundreds of agents simultaneously on a single project. What previously required a team of 8-12 developers working for three months can now be accomplished by one orchestrator managing AI agents over the course of a day.
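The coordination pattern described here can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular tool implements it: `Workstream`, `run_agent`, `agent_manager`, and `PLAN` are hypothetical names, and the agent body is a placeholder sleep rather than a real model call or sandbox.

```python
import asyncio
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Workstream:
    name: str                         # e.g. "database", "api"
    depends_on: Tuple[str, ...] = ()  # workstreams that must finish first


async def run_agent(ws, finished, log):
    """Hypothetical stand-in for one sandboxed agent."""
    # Block until every upstream workstream has signalled completion.
    for dep in ws.depends_on:
        await finished[dep].wait()
    await asyncio.sleep(0.01)  # placeholder for model calls and file edits
    log.append(ws.name)        # record completion order
    finished[ws.name].set()    # unblock any downstream agents
    return f"{ws.name}: done"


async def agent_manager(workstreams):
    """Start all agents concurrently and let dependencies order the work."""
    finished = {ws.name: asyncio.Event() for ws in workstreams}
    log = []
    results = await asyncio.gather(
        *(run_agent(ws, finished, log) for ws in workstreams)
    )
    return results, log


# Assumed decomposition, mirroring the four workstreams named in the text.
PLAN = [
    Workstream("database"),
    Workstream("frontend"),
    Workstream("api", depends_on=("database",)),
    Workstream("tests", depends_on=("api", "frontend")),
]

if __name__ == "__main__":
    results, order = asyncio.run(agent_manager(PLAN))
    print(order)  # "tests" always finishes last
```

Independent workstreams (here `database` and `frontend`) run side by side, while dependent ones wait only for the pieces they need. Merge-conflict resolution and standards enforcement are left out of this sketch.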

How Does Autonomous Browser Testing Work?

Autonomous testing agents launch applications in real browsers, navigate through user workflows, fill out forms, click buttons, and record video proof of every test run. They catch visual regressions and broken user flows that unit tests miss.

One of the most significant breakthroughs in agentic development is autonomous browser testing. After agents build a feature, testing agents launch the application in a real browser. They navigate through user workflows, fill out forms, click buttons, and verify that the output matches the specification. They record video proof of every test run, creating an auditable trail of functionality. This is not unit testing or integration testing in the traditional sense. These are end-to-end tests performed by AI agents that interact with the application exactly as a human user would. They catch visual regressions, broken user flows, and edge cases that unit tests miss. The testing agents can also simulate different user roles, screen sizes, and network conditions. For businesses commissioning custom software, this means receiving not just a working application but video evidence that every specified workflow functions correctly. This dramatically reduces the back-and-forth of traditional quality assurance cycles.
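To make the idea of an auditable trail concrete, here is a small sketch of how a test run could be recorded step by step. Everything in it is an assumption for illustration: `FakeBrowser` is an in-memory stand-in for a real browser driver, `Step` and `run_workflow` are hypothetical names, and the video path is only a label because nothing is actually recorded.

```python
from dataclasses import dataclass


@dataclass
class Step:
    action: str      # "goto", "fill", "click", or "expect_text"
    target: str      # URL, field name, button name, or expected text
    value: str = ""  # text to type; only used by "fill"


class FakeBrowser:
    """Hypothetical in-memory stand-in for a real browser driver."""

    def __init__(self):
        self.fields = {}
        self.page_text = ""

    def goto(self, url):
        self.page_text = f"Signup form at {url}"

    def fill(self, name, value):
        self.fields[name] = value

    def click(self, name):
        # The fake app only greets the user if an email was entered.
        if name == "submit" and self.fields.get("email"):
            self.page_text = f"Welcome, {self.fields['email']}"

    def expect_text(self, text):
        return text in self.page_text


def run_workflow(browser, steps, video_path):
    """Execute each step and return a record of what happened."""
    trail = []
    for step in steps:
        if step.action == "expect_text":
            ok = browser.expect_text(step.target)
        elif step.action == "fill":
            browser.fill(step.target, step.value)
            ok = True
        else:  # "goto" or "click"
            getattr(browser, step.action)(step.target)
            ok = True
        trail.append({"action": step.action, "target": step.target, "passed": ok})
    return {
        "video": video_path,  # label only; no recording happens in this sketch
        "steps": trail,
        "passed": all(s["passed"] for s in trail),
    }


SIGNUP = [
    Step("goto", "/signup"),
    Step("fill", "email", "ana@example.com"),
    Step("click", "submit"),
    Step("expect_text", "Welcome, ana@example.com"),
]

if __name__ == "__main__":
    print(run_workflow(FakeBrowser(), SIGNUP, "runs/signup.webm")["passed"])
```

A real testing agent would drive an actual browser and decide the steps itself. The point of the sketch is the shape of the record: one entry per user action, plus a pointer to the video evidence.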

How Are Artifacts Replacing Traditional Code Review?

Artifacts are structured documents that describe what was built, the reasoning behind architectural decisions, test results, and known limitations. Stakeholders can comment on and approve them in plain language, like a Google Doc.

Traditional code review requires reading through hundreds or thousands of lines of code to verify correctness. Agentic development introduces artifacts. These are structured documents that describe what was built, the reasoning behind architectural decisions, test results, and known limitations. Artifacts are living documents that stakeholders can comment on and revise, similar to collaborating on a Google Doc. Instead of reviewing code you may not understand, you review a plain-language description of what the system does. You verify that it matches your requirements. This fundamentally changes the client-developer relationship. Business owners no longer need to trust blindly that the code works. They can read, comment on, and approve the architectural decisions in language they understand. Artifacts also create a knowledge base that persists across the project lifecycle. This makes maintenance and future development significantly easier.
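One way to picture an artifact is as a small structured record that renders to plain language. The sketch below assumes a hypothetical `Artifact` class whose fields mirror the four parts named above. It is an illustration of the concept, not the format any specific tool uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Artifact:
    """Hypothetical plain-language record of one delivered feature."""
    title: str
    what_was_built: str
    decisions: List[str]          # reasoning behind architectural choices
    test_results: str
    known_limitations: List[str]
    comments: List[Tuple[str, str]] = field(default_factory=list)
    approved_by: Optional[str] = None

    def comment(self, author, text):
        self.comments.append((author, text))

    def approve(self, author):
        self.approved_by = author

    def render(self):
        """Return the artifact as text a non-technical reader can review."""
        status = (
            f"approved by {self.approved_by}"
            if self.approved_by
            else "awaiting approval"
        )
        lines = [self.title, "", "What was built: " + self.what_was_built]
        lines += ["", "Decisions:"] + ["- " + d for d in self.decisions]
        lines += ["", "Test results: " + self.test_results]
        lines += ["", "Known limitations:"]
        lines += ["- " + k for k in self.known_limitations]
        lines += ["", "Comments:"] + [f"- {a}: {t}" for a, t in self.comments]
        lines += ["", "Status: " + status]
        return "\n".join(lines)


if __name__ == "__main__":
    art = Artifact(
        title="Customer signup flow",
        what_was_built="A signup form that emails a confirmation link.",
        decisions=["Store emails in lowercase to avoid duplicate accounts."],
        test_results="4 of 4 browser workflows passed.",
        known_limitations=["No social login yet."],
    )
    art.comment("Owner", "Please add a phone number field later.")
    art.approve("Owner")
    print(art.render())
```

Because comments and approval live on the same record as the decisions and test results, the artifact can double as the persistent knowledge base the section describes.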

What Does Agentic Development Mean for Project Costs and Timelines?

Custom software that previously cost $80,000-$150,000 over six months can now be delivered at $15,000-$40,000 over 2-6 weeks. This is a structural shift in the economics of custom software, not a marginal improvement.

The business impact of agentic development is straightforward. Projects that previously required 3-6 months and a team of developers can now be delivered in 2-6 weeks by a smaller team orchestrating AI agents. This is not a marginal improvement. It is a structural shift in the economics of custom software. For SMBs doing $500k to $5M in revenue, this changes the calculus entirely. Custom software that was previously cost-prohibitive at $80,000-$150,000 over six months can now be delivered at $15,000-$40,000 over a few weeks. The quality ceiling has risen as well. AI agents produce more consistent code, more comprehensive test coverage, and more thorough documentation than most human development teams. Custom business applications, tools precisely tailored to your workflows, your data, and your competitive advantage, are now accessible to the exact segment of growing businesses that need them most.
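The headline figures can be checked against the ranges quoted in this section. The short calculation below takes the midpoint of each range, which is an assumption made only for illustration. Pairing the extremes of the ranges instead gives a wider spread.

```python
def midpoint(low, high):
    return (low + high) / 2


WEEKS_PER_MONTH = 52 / 12  # assumed conversion from months to weeks

# Cost ranges quoted in the article, in USD.
old_cost = midpoint(80_000, 150_000)
new_cost = midpoint(15_000, 40_000)
cost_reduction = 1 - new_cost / old_cost

# Timeline ranges quoted in the article: 3-6 months vs. 2-6 weeks.
old_weeks = midpoint(3, 6) * WEEKS_PER_MONTH
new_weeks = midpoint(2, 6)
speedup = old_weeks / new_weeks

# Pairing the extremes of the cost ranges shows the full spread.
smallest_saving = 1 - 40_000 / 80_000
largest_saving = 1 - 15_000 / 150_000

if __name__ == "__main__":
    print(f"midpoint cost reduction: {cost_reduction:.0%}")
    print(f"midpoint speedup: {speedup:.1f}x")
    print(f"saving range: {smallest_saving:.0%} to {largest_saving:.0%}")
```

At the midpoints, the cost reduction comes out at about 76% and the speedup at about 4.9x, consistent with the 70-80% and 3-5x figures. The extremes of the cost ranges span savings from 50% to 90%, so an individual project can land outside the headline band.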

What Is the Bottom Line?

Agentic development has made custom business software 3-5x faster and 70-80% cheaper to deliver. The businesses that recognise this shift and act on it now will have a compounding advantage over competitors still building software the old way.

Four predictions for the next 12-18 months:

- First, the coder-to-orchestrator shift will accelerate. The most valuable technical skill will not be writing code but designing systems and directing AI agents to build them. This is why vibe coding alone falls short.

- Second, artifacts will replace traditional code review for non-technical stakeholders. This makes software development more collaborative and transparent.

- Third, long-running autonomous agents will handle maintenance, monitoring, and incremental improvements without human intervention. This reduces the ongoing cost of software ownership.

- Fourth, visual logic layers will emerge that let business owners modify application behavior through flowchart-style interfaces rather than code changes.

ManaTech already operates this way. We use agentic development to deliver custom business applications at speeds and price points that were impossible 18 months ago. The businesses that recognise this shift and act on it now will have a compounding advantage over competitors still building software the old way.

Research Data

Key strategies and factors based on original research

| Statistic | Headline metric | Comparison | Data points |
|---|---|---|---|
| Software Engineering Benchmark | 80% score on SWE-bench Verified | AI performance vs. mid-level developers | Models solve 800 out of 1000 novel coding problems perfectly; human comparison suggests a mid-level dev might solve 50% in a year. |
| Token Efficiency (Sub-agents) | 90% performance increase | Single agent vs. sub-agent architecture | Anthropic tests found Opus with sub-agents outperformed single-agent Opus by over 90% on research tasks. |
| Compound Success Rates | 59% total success rate | Single step at 90% vs. 5-step process | Five steps at 90% individual success equals 59% total; 10 steps equals 35%; 20 steps equals 12%. |
| Horizontal Leverage | 9,000 units of economic value | Automating 100% of 1 role vs. 90% of 10,000 roles | Automating 90% of 10,000 roles provides 9,000 units of value compared to 1 unit for 100% of one role. |
| Cost of Intelligence | 40% price reduction | Claude Opus 4.5 vs. GPT-3 (2020) | Price dropped from 15 USD/75 USD to 5 USD/25 USD per 1M tokens (input/output), a cut of about two-thirds in list price; overall intelligence cost is reported to have fallen 40% recently. |
| Operational Speed | 15 seconds vs. 20 minutes | Agentic workflow vs. manual human process | A meal prep search/email task took under 15 seconds via agent vs. 20 minutes manually. |
| Deterministic vs. Probabilistic Speed | 53 milliseconds vs. 30 seconds | Python script (deterministic) vs. raw LLM (probabilistic) | Sorting a list takes 53 ms via script vs. approximately 30 seconds for native LLM intelligence; the script is several hundred times faster. |
| Business ROI | 160,000 USD monthly revenue | Traditional agency vs. AI-based service agency | Nick Saraev built two AI-based service agencies to 160,000 USD in combined monthly revenue using these workflows. |
| AI Agent Market Growth | 43% CAGR | 2025 projection vs. 2030 projection | Market value is projected to grow from 8 billion USD in 2025 to a 48-52 billion USD range by 2030. |
| Deloitte Projection | Enterprise adoption rate | Current pilots vs. 2027 deployment | 25% of enterprises using GenAI will deploy agentic pilots in 2025, jumping to 50% by 2027. |
| Traditional vs. Agentic Workflows | Operational logic | Deterministic (old) vs. probabilistic (new) | Old: manual node configuration and debugging. New: user defines outcome in natural language; agent handles self-healing and tool research. |
| Automation Speed | Time to build | Traditional low-code (n8n) vs. agentic (Claude Code) | A lead generation automation took minutes to build with agents versus at least 1 hour manually in n8n. |
| Traditional Automation Completion | 60% to 70% success rate | Automated builders vs. production-ready needs | Previous-generation AI builders (natural language to workflow) usually get users 60% or 70% of the way toward a completed workflow. |
| Agent Longevity Benchmarks | Success rate over time | Short-term sprinting vs. long-running projects | Vending Bench shows reasoning models degrade over time, forgetting orders and mistracking inventory in long simulations. |
| A2A Protocol Support | Ecosystem adoption | Proprietary vs. open standard | Google Cloud launched A2A in April 2025 with support from Salesforce, SAP, ServiceNow, Workday, and 50+ partners. |
| AI Code Generation | 30% to 40% of new code | AI-written vs. human-written code | AI is already writing 30% to 40% of new code at major tech companies. |
| Anthropic Compiler Project | 20,000 USD development cost | Human dev team cost vs. agent team API cost | Building a C compiler from scratch cost 20,000 USD in API fees using 16 agents; human dev teams would cost hundreds of thousands. |
| Agent Teams vs. Sub-agents | Token efficiency and coordination | Isolation vs. collaboration | Agent teams use 2 to 4 times more tokens than sub-agents but offer peer-to-peer coordination; sub-agents are better for isolated research. |

Original research by ManaTech
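Two rows in the table rest on simple arithmetic that is easy to reproduce. The sketch below recomputes the compound success rates and the growth rate implied by the market projection. The helper names `compound_success` and `cagr` are ours, and the calculation assumes each step succeeds independently and that growth compounds annually over five years.

```python
def compound_success(per_step, steps):
    """Chance that every step succeeds, assuming independent steps."""
    return per_step ** steps


def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(f"{n} steps at 90%: {compound_success(0.9, n):.0%}")
    # prints 59%, 35%, and 12% for 5, 10, and 20 steps

    low = cagr(8, 48, 5)   # 8 billion USD in 2025 to 48 billion USD in 2030
    high = cagr(8, 52, 5)  # same start, 52 billion USD in 2030
    print(f"implied CAGR: {low:.1%} to {high:.1%}")
```

The compound figures match the table exactly. The implied growth rate comes out at roughly 43% to 45% a year, in line with the 43% CAGR quoted for the low end of the range. The steep fall-off in compound success is the practical argument for keeping agent workflows short or adding verification between steps.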

Frequently Asked Questions

What is agentic development?

Agentic development is a software development approach where a single developer orchestrates teams of autonomous AI agents working in parallel, rather than writing code line by line. The developer defines architecture and constraints, reviews output, and makes judgment calls while agents handle implementation across database, API, frontend, and testing workstreams.

How much faster is agentic development than traditional development?

Projects that previously required 3-6 months with a full development team can now be delivered in 2-6 weeks. Custom software that cost $80,000-$150,000 can now be delivered at $15,000-$40,000. This is a structural shift in the economics of custom software, not a marginal improvement.

Does agentic development produce lower quality software?

AI agents actually produce more consistent code, more comprehensive test coverage, and more thorough documentation than most human development teams. Autonomous browser testing creates video proof that every specified workflow functions correctly, and artifacts provide an auditable trail of architectural decisions.

What is the future of agentic development?

Four key predictions for the next 12-18 months: the coder-to-orchestrator shift will accelerate, artifacts will replace traditional code review for non-technical stakeholders, long-running autonomous agents will handle maintenance and monitoring, and visual logic layers will let business owners modify application behavior through flowchart-style interfaces.

How does agentic development reduce custom software costs for SMBs?

By replacing teams of 8-12 developers with one orchestrator managing AI agents, agentic development reduces labor costs by 70-80%. Custom business applications precisely tailored to your workflows are now accessible to businesses doing $500k-$5M in revenue, a segment previously priced out of custom software.

