How to Choose an AI Implementation Partner in NZ

Quick Answer

Choose an AI implementation partner in NZ by testing whether they understand your operating workflow before they recommend technology. The right partner can map the bottleneck, redesign the process, integrate AI into your existing systems, define responsible AI controls, and measure ROI after launch. The wrong partner sells a model, chatbot, or automation package before they understand how your business actually works.

Key Answers

- What should an AI implementation partner do first?

  They should map the business workflow, data sources, decision points, handoffs, risks, and measurable outcome before recommending any model, platform, or agent. Discovery comes before architecture.

- What is the biggest red flag when choosing an AI partner?

  The biggest red flag is a partner who starts with a tool demo instead of the business bottleneck. AI fails when it is layered on top of a broken workflow rather than built into a redesigned operating process.

- Does a NZ business need an AI consultant or a custom builder?

  If the work touches your core operations, you usually need a builder, not just a consultant. Strategy is useful, but production value comes from integration, governance, workflow change, and ongoing support.

- How should ROI be measured on an AI implementation?

  Measure workflow outcomes: time saved, errors reduced, revenue unlocked, labour avoided, cycle time reduced, and adoption by the team. Prompt counts and seat usage are not business ROI.

- What responsible AI questions should NZ businesses ask?

  Ask how the partner handles privacy, accountability, human oversight, data access, monitoring, risk escalation, and compliance with relevant NZ obligations. MBIE guidance makes responsible AI a business practice, not a legal afterthought.

Key Takeaways

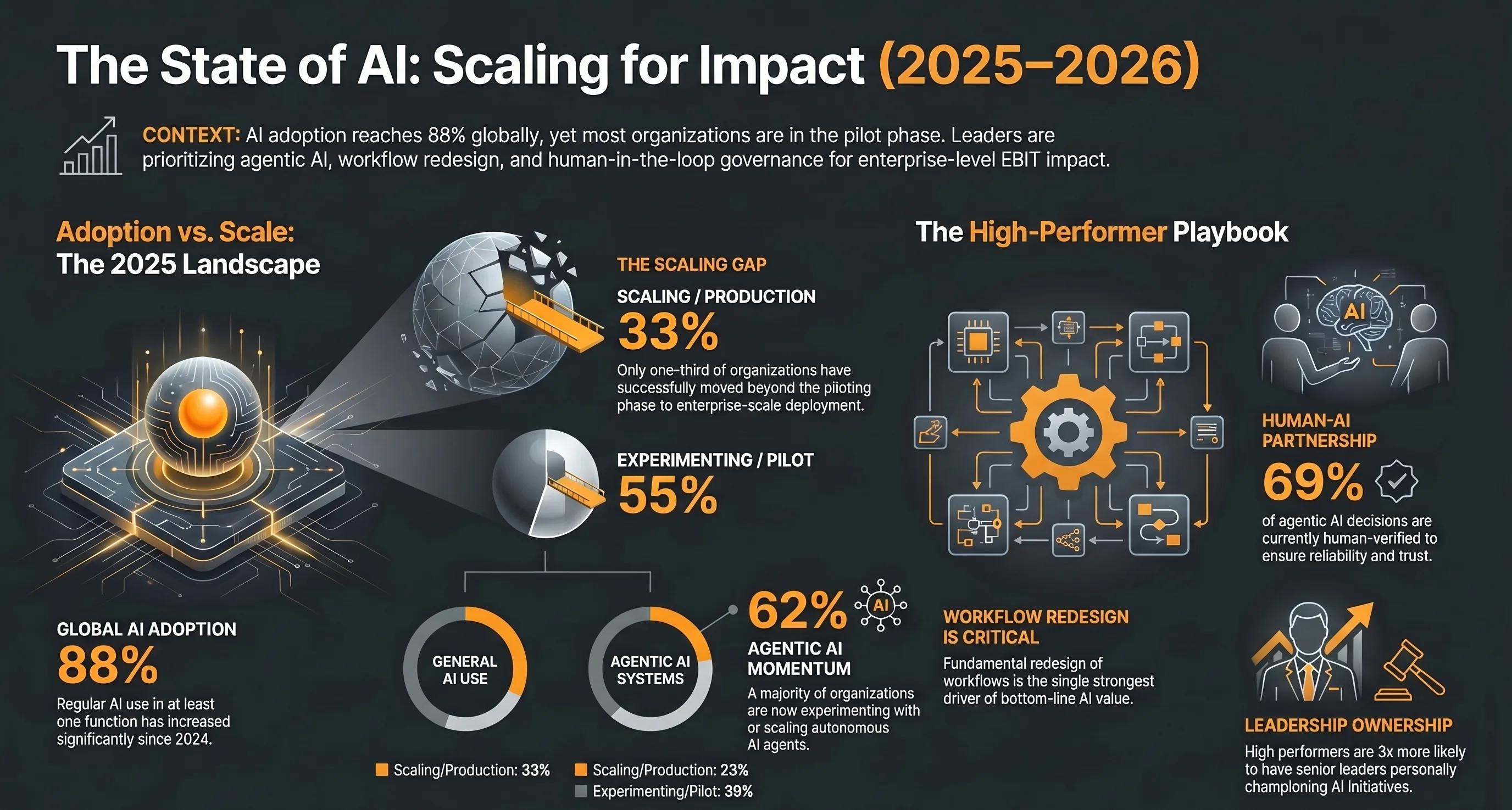

- McKinsey reports that most organisations are still experimenting or piloting AI, while high performers capture value by redesigning workflows and tracking business outcomes.

- New Zealand AI policy is adoption-led: MBIE wants businesses to use AI confidently, responsibly, and in ways that lift productivity.

- A strong AI implementation partner starts with business logic, not a platform preference; they should be able to explain the workflow they will change.

- Production AI needs integration, monitoring, human review, and support after launch; a demo is not an operating system.

- The best first AI project is a high-friction workflow with clear volume, available data, defined ownership, and a measurable cost or revenue lever.

What Should an AI Implementation Partner Actually Do?

An AI implementation partner should turn a business bottleneck into a working operating system. The work starts with discovery, then moves through workflow design, integration, governance, delivery, and ongoing support.

The phrase "AI implementation" gets used loosely. Some vendors mean a ChatGPT workshop. Some mean a platform licence. Some mean a dashboard with an AI label. None of those are enough when the system is expected to run part of the business. For a NZ business, implementation means the AI is embedded into the workflow where work already happens: the CRM, inbox, job system, Xero file, booking platform, spreadsheet, portal, or internal approval process.

McKinsey research on AI value capture makes the same point from the enterprise side. The organisations seeing material AI impact are not just buying tools. They are redesigning workflows, assigning senior ownership, and tracking outcomes. That pattern applies just as strongly to a 20-person NZ operator as it does to a global enterprise. The scale changes. The discipline does not.

Why Do So Many AI Projects Stall After the Demo?

AI projects stall because the demo proves the model can respond, not that the business can operate differently. Production requires data access, workflow ownership, monitoring, governance, and team adoption.

A demo is a controlled environment. It uses clean prompts, a narrow scenario, and a person who knows what result they want. A business workflow is different. The data is messy. The process has exceptions. The customer does not ask the question neatly. The staff member has three other systems open. The finance team needs an audit trail. The owner needs to know who is accountable when the system is wrong.

That is why AI implementation needs an ROI model before it needs a model choice. If the project cannot be tied to time saved, error reduction, faster cycle time, lower labour cost, better conversion, or higher service capacity, it is not ready to build. For the measurement layer, use the same discipline outlined in our guide to measuring AI automation ROI: define the baseline first, then measure the changed workflow after launch.
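The baseline-then-measure discipline can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the field names, the hourly cost, the running cost, and every figure below are assumptions made up for the example, and the only lever modelled is labour time (handling plus rework).

```python
from dataclasses import dataclass


@dataclass
class WorkflowSnapshot:
    """Measurements for one workflow over one period (field names are illustrative)."""
    items_processed: int
    minutes_per_item: float
    error_rate: float               # share of items needing rework, 0.0 to 1.0
    rework_minutes_per_error: float


def labour_hours(snapshot: WorkflowSnapshot) -> float:
    """Hours spent on handling plus rework during the period."""
    handling = snapshot.items_processed * snapshot.minutes_per_item
    rework = (snapshot.items_processed * snapshot.error_rate
              * snapshot.rework_minutes_per_error)
    return (handling + rework) / 60


def roi_summary(baseline: WorkflowSnapshot, after: WorkflowSnapshot,
                hourly_cost: float, run_cost: float) -> dict:
    """Compare the changed workflow against the baseline for the same period."""
    hours_saved = labour_hours(baseline) - labour_hours(after)
    labour_value = hours_saved * hourly_cost
    return {
        "hours_saved": hours_saved,
        "labour_value": labour_value,
        "net_benefit": labour_value - run_cost,  # value left after running costs
    }


# Made-up monthly figures for an invoice reconciliation workflow.
baseline = WorkflowSnapshot(400, 12.0, 0.05, 30.0)
after = WorkflowSnapshot(400, 3.0, 0.02, 30.0)
summary = roi_summary(baseline, after, hourly_cost=45.0, run_cost=900.0)
```

The point of the structure is that the baseline snapshot has to exist before the build starts. If nobody can fill in those four numbers for the current workflow, the project is not yet measurable.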

What Questions Should You Ask Before Hiring an AI Partner?

Ask questions that force the partner to explain how value will be built, not just what technology will be used. The strongest answers connect workflow, integration, risk, support, and ROI.

Start with discovery: "How will you understand our workflow before proposing a solution?" Then integration: "Which systems will this connect to, and what happens if those systems have no clean API?" Then governance: "Where does human review happen, and who is accountable for errors?" Then support: "What happens after go-live when the process changes or the team finds edge cases?" Finally, ROI: "Which metric will prove this worked?"

A serious partner should answer those questions without retreating into jargon. They should be able to describe the future workflow in plain language: what triggers the system, what data it reads, what decision it makes, when a human checks it, where the output goes, and how success is measured. If they cannot describe that chain, they have not designed the implementation yet.
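One way to make that chain concrete is to write it down as a structured record and check that no link is blank. The sketch below is illustrative only: the `WorkflowSpec` class, its field names, and the example values are hypothetical, chosen to mirror the six links named above.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowSpec:
    """Plain-language description of the future workflow (hypothetical structure)."""
    trigger: str = ""             # what starts the system
    data_read: str = ""           # what data it reads
    decision: str = ""            # what decision it makes
    human_check: str = ""         # when a person reviews it
    output_destination: str = ""  # where the result goes
    success_metric: str = ""      # how success is measured


def missing_links(spec: WorkflowSpec) -> list[str]:
    """Return the names of any links in the chain that are still undefined."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name).strip()]


# Example: a proposal that has not yet said who reviews the output
# or which metric will prove the work succeeded.
draft = WorkflowSpec(
    trigger="New supplier invoice arrives in the shared inbox",
    data_read="Invoice PDF and matching purchase order",
    decision="Match, flag a mismatch, or mark as unknown supplier",
    output_destination="Draft bill in the accounting system",
)
gaps = missing_links(draft)
```

Here `gaps` lists `human_check` and `success_metric`, which are exactly the questions to send back to the partner before signing.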

How Should NZ Businesses Think About Responsible AI?

Responsible AI for a NZ business means clear purpose, privacy protection, human oversight, risk management, transparency, and ongoing monitoring. It should be built into the implementation, not bolted on later.

MBIE's Responsible AI Guidance for Businesses frames AI governance as a practical operating discipline. Businesses need to understand why they are using AI, which risks the system introduces, how those risks are managed, and whether existing business controls are still fit for purpose. That matters for small businesses as much as large ones because AI often touches customer data, staff decisions, intellectual property, and service quality.

The partner you choose should be able to translate responsible AI into design choices. That means permissioned data access, logs, approval steps, escalation paths, prompt and tool controls, secure deployment, and a clear distinction between what the AI can do alone and what a person must approve. A vague promise that "the AI is safe" is not governance.

What Are the Red Flags in an AI Implementation Proposal?

The red flags are tool-first selling, vague ROI, no integration plan, no human review design, no support model, and no explanation of what changes in the actual workflow.

Be careful with any proposal that names a model before it names the bottleneck. Be careful with fixed packages that do not ask how your work currently moves. Be careful with claims of full autonomy in areas where the cost of an error is high. Be careful with partners who say integration can happen later. If the AI sits beside the workflow instead of inside it, staff will eventually abandon it.

Another red flag is a partner who treats custom build and off-the-shelf software as the same decision. They are not. Off-the-shelf tools are useful when the workflow is generic. Custom implementation is justified when the workflow is specific, valuable, and hard to force into someone else's product. That is the same logic behind our build vs buy framework for deciding when SaaS has stopped paying off.

What Should the First AI Project Be?

The first AI project should be a repeatable, painful workflow with clear volume, available data, measurable cost, and a defined owner. Start where the business already feels the bottleneck.

Good first projects include invoice reconciliation, report generation, sales follow-up, client onboarding, job scheduling, quoting, compliance documentation, support triage, and internal knowledge retrieval. These workflows have repeatable inputs, clear outputs, and a visible cost when the work is done manually. They also create the foundation for larger AI systems because they expose the data, approvals, and integrations the business already depends on.
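The selection criteria can be turned into a simple shortlist filter. The sketch below is one possible reading, not a scoring standard: the `Candidate` fields and the sample figures are invented, and ranking ready candidates by current manual cost is an assumption about which lever matters most.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A workflow being considered as the first AI project (illustrative fields)."""
    name: str
    monthly_volume: int          # how often the work repeats
    data_available: bool         # inputs can be accessed today
    has_owner: bool              # someone is accountable for the workflow
    monthly_manual_cost: float   # current cost of doing it by hand, in NZD


def is_ready(c: Candidate) -> bool:
    """A candidate qualifies only if every criterion is met."""
    return (c.monthly_volume > 0 and c.data_available
            and c.has_owner and c.monthly_manual_cost > 0)


def rank_candidates(candidates: list[Candidate]) -> list[Candidate]:
    """Drop candidates that are not ready, then sort by manual cost, highest first."""
    ready = [c for c in candidates if is_ready(c)]
    return sorted(ready, key=lambda c: c.monthly_manual_cost, reverse=True)


# Made-up examples.
shortlist = rank_candidates([
    Candidate("Invoice reconciliation", 400, True, True, 4000.0),
    Candidate("Quoting", 60, True, True, 2500.0),
    Candidate("Support triage", 900, False, True, 6000.0),   # data not accessible yet
    Candidate("Report generation", 20, True, False, 1500.0),  # no defined owner
])
```

With these figures the shortlist contains invoice reconciliation first and quoting second. Support triage has the highest cost but is filtered out because its data is not yet available, which is the kind of trade-off the criteria are meant to surface.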

Do not start with the most glamorous agent. Start with the workflow where the business can see value inside one quarter. The pattern is similar to the AI quick wins framework: prove one operational improvement, then stack the next system on top of it.

What Is the Bottom Line?

Choose the AI implementation partner who can architect the operating system, not just demonstrate the technology. The right partner starts with your workflow and ends with measurable business leverage.

AI implementation is not a software shopping exercise. It is a business architecture decision. The partner you choose should understand the current workflow, design the future workflow, build the integrations, define the human controls, measure the outcome, and stay close enough after launch to improve the system as reality changes. That is how AI moves from experiment to infrastructure.

Research Data

Key strategies and factors based on original research

| Evaluation Area | What Strong Looks Like | Why It Matters | Weak Signal | Source Theme |
|---|---|---|---|---|
| Business discovery | Maps the operating model before proposing tools or agents | AI value depends on the workflow being redesigned around a real business constraint | Starts with a model or platform demo before understanding the process | McKinsey workflow redesign |
| Workflow redesign | Changes how work moves through the business rather than adding AI on top of old steps | McKinsey identifies workflow redesign as one of the strongest contributors to bottom-line AI impact | Only automates the visible task and leaves handoffs/manual checks untouched | McKinsey State of AI 2025 |
| Integration capability | Connects AI to the systems of record where work already happens | AI pilots stall when outputs are not embedded into operational systems | Requires staff to copy and paste between separate tools | McKinsey scaling practices |
| Responsible AI governance | Defines human review, privacy, accountability, and risk controls before production | NZ businesses are expected to adopt AI in a trustworthy way under MBIE guidance | Treats governance as a policy document written after launch | MBIE Responsible AI guidance |
| Human-in-the-loop design | Specifies which AI decisions are human-verified and which can be automated | Dynatrace reports that human verification remains a core control layer for agentic AI | Promises full autonomy without oversight or monitoring | Dynatrace Pulse of Agentic AI 2026 |
| KPI and ROI tracking | Measures time saved, error reduction, revenue lift, and adoption by workflow | McKinsey high performers track AI value through defined KPIs and enterprise-level impact | Reports usage metrics only, such as prompts run or seats activated | McKinsey State of AI 2025 |
| Support model | Includes monitoring, iteration, retraining, and workflow support after launch | AI systems need ongoing ownership because processes, data, and risk conditions change | Ends at handover with no operating rhythm or improvement loop | Dynatrace and MBIE governance themes |
| NZ context | Understands NZ privacy, public guidance, business size, and adoption-first strategy | New Zealand's AI strategy prioritises private-sector adoption and practical productivity gains | Uses only overseas enterprise examples without adapting to NZ constraints | MBIE AI Strategy 2025 |

Original research by ManaTech

Frequently Asked Questions

What is an AI implementation partner?

An AI implementation partner designs and builds the operational system that makes AI useful inside a business. That can include workflow discovery, custom application development, data integration, agent design, automation, governance, staff enablement, monitoring, and ongoing optimisation after launch.

How is an AI implementation partner different from an AI consultant?

An AI consultant may produce a strategy, roadmap, or opportunity assessment. An AI implementation partner is accountable for turning that strategy into a working system. For growing businesses, the implementation gap is usually where value is won or lost.

What should be included in an AI implementation proposal?

A useful proposal should include the workflow being changed, target outcome, integrations required, data assumptions, human review points, risk controls, delivery phases, support model, pricing, and the KPIs used to judge success. If the proposal is mostly tool names, it is not specific enough.

How long does AI implementation take for a NZ business?

A narrow workflow automation can often be scoped and shipped in 4 to 8 weeks. A deeper operational system with multiple integrations, AI agents, dashboards, and governance controls usually takes 8 to 16 weeks. The timeline depends less on the model and more on data access, workflow complexity, and decision ownership.

Should a small business use off-the-shelf AI tools first?

Yes, for low-risk individual productivity tasks. But once AI touches client delivery, finance, operations, sales follow-up, compliance, or customer experience, the business needs integration and ownership. At that point, a custom workflow usually outperforms a stack of disconnected tools.

What should ManaTech build first for a business exploring AI?

The first build should target a bottleneck with measurable volume: invoice reconciliation, lead qualification, client onboarding, report generation, support triage, scheduling, quoting, or data entry. ManaTech scopes the workflow first, then builds the system around how the business actually operates.

